Call for Submissions

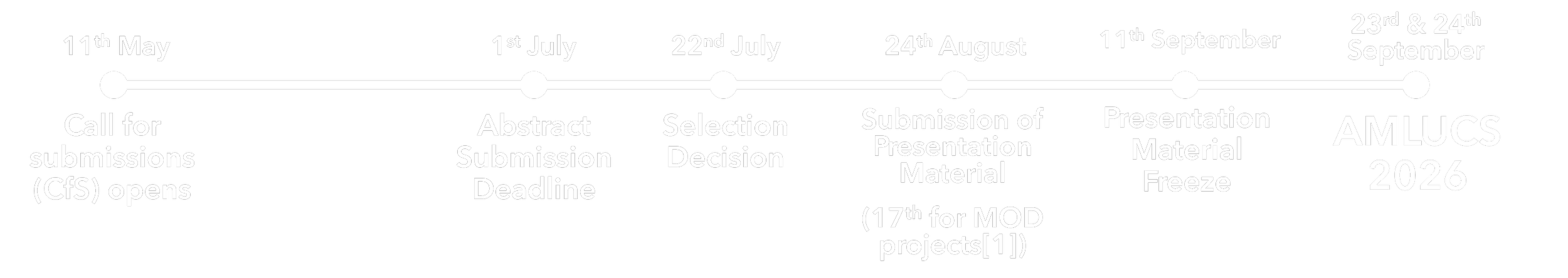

Key Dates

Please note these deadlines are fixed - extensions are not planned.

[1] MOD-funded research is required to follow the Permission to Publish (P2P) process. The AMLUCS team will assist you with this.

Application Process

We welcome submissions relating to, but not limited to, the following areas:

A) AI Security Threats, Vulnerabilities & Mitigations:

Agentic AI Threats in Practice

(e.g. Prompt injection, tool misuse, memory/identity abuse, multi-agent trust boundaries. Scope tightly to real/realistic attack surfaces - not theoretical agent design)

Real-World Incidents & Bug Bounties

(e.g. Writeups of AI-specific incidents, bug bounty findings, and near-miss case studies. Practitioner storytelling)

Practical Mitigations & Deployment Hardening

(e.g. What works in production: guardrails, architectural patterns, org-level controls. Priority given to evidence over theory)

Evaluation & Red-Teaming Methodology

(e.g. How to test AI systems beyond benchmarks - tooling demos, structured red-team approaches, evaluation frameworks in use)

Adversarial ML with Real Stakes

(e.g. Backdoors, model stealing, data poisoning - scoped strictly to deployed-system context. Abstract attacks without real-world grounding will be deprioritised)

Supply Chain & Model Integrity

(e.g. Provenance of weights, fine-tuning poisoning, third-party model risk. This is a fast-growing enterprise concern, which is underrepresented in the UK AI security community)

B) Agent Capabilities, Offensive/Defensive AI & Cyber Operations:

Offensive and Defensive Agent Capabilities

(e.g. Autonomous offensive/defensive architectures, AI-generated malware and evasion techniques, social engineering at scale, agentic deception, automated vulnerability detection and patching)

AI-Enabled Cyber Operations and Human-AI Teaming

(e.g. Human-AI teaming in cyber operations, agent-enabled cyberattacks, analysis of real-world attacks, AI-enabled forensics)

Agent Evaluation and Realistic Cyber Training Environments

(e.g. Cyber risk/capability benchmarking, environments for training and evaluating agents, reliable evaluation methodologies)

Security-Focused AI Models and Methods

(e.g. Reinforcement learning, generative formal verification, security-focused foundation models)

C) Multi-Agent Systems, Architectures & Secure Design:

Secure Architectures/Topologies and Defences

(e.g. Architectures that provide security guarantees, secure communication and co-ordination, defensive mechanisms, maintaining awareness of failure modes)

Practical Multi-Agent Security

(e.g. Emergent behaviours in the wild and their theoretical explanations, practical and effective defences, practical benchmarking and evaluation, threat modelling, human-multi-agent interaction)

Multi-Agent System Vulnerabilities

(e.g. Any multi-agent-novel vulnerabilities not covered by the sections above, including communication protocol vulnerabilities, other emergent behaviours, tool and environment vulnerabilities, system-level weaknesses)

D) AI Security Governance, Risk Assessment & Standards:

AI Security Risk Management

(e.g. Quantification of AI security risk, assessing mitigation effectiveness)

Application of AI Security Standards & Frameworks

(e.g. Real-world experience & best practice in applying EN 304 223 / OWASP / NIST)

AI Security Governance Landscape and Challenges

(e.g. Dynamic AI security governance for agentic systems; governance and competitive challenges in regulated, CNI, and defence environments)

Monitoring & Evaluating Multi-Agentic Environments

(e.g. AI in the loop, scaling human oversight of security in multi-agentic environments)

E) Explainability, Interpretability & AI Fairness in Security Contexts:

Fairness by Design in Security Systems

(e.g. Ensuring fairness with partial or missing user and threat data, operationalising ethical principles in security tooling, reconciling conflicting performance, risk and fairness metrics)

Advances in Explainability for Security

(e.g. Inherently interpretable cyber security models, understanding the causal factors behind automated security decisions, real-time analyst-centric explanations, explainability under adversarial pressure such as deception)

Applying Interpretability Techniques in Cyber Operations

(e.g. White box models and intrinsic interpretability, meta-reasoning for complex multi-stage security decisions, hybrid models for combining machine learning predictions with rules-based techniques and traditional methods)

Operationalising Tools in Safety-Critical Domains

(e.g. Guarding against cyber deception and misinterpretation, measuring real-world explanation quality and reliability)

If you feel your research is relevant but doesn’t align with these themes, please contact amlucs@fnc.co.uk with an outline of your idea so that we can discuss it.

Submissions are deliberately concise, to encourage sight of leading-edge work without the demands of a full academic paper. Submissions shall comprise:

Abstract - summarising your research, its aims, benefits and key findings (max. 500 words, excluding references)

Direct response to the four evaluation criteria (max. 100 words each):

Innovative & rigorous application of AI/ML methods (e.g. consideration of related work, benchmarking)

Real-world cyber security impact (practitioner-first: theory only welcome where it bridges directly to deployment)

Top 3 takeaways (can someone building or defending a real system walk away with something actionable?)

Quality and audience appeal (e.g. live walkthroughs, demos, case study narratives)

Links to codebase (not mandatory, so that leading-edge, commercial and sensitive work can still be submitted, but viewed favourably where possible)

Metadata:

Submission type (20-min briefing, poster, or both)

Mapping to related conference theme(s) listed above

Research funding route (e.g. Internal funding, customer X)

Permission to post your material to our website post-event (not mandatory)

Previous presentations, publications and submissions related to your AMLUCS submission (research submitted elsewhere is not excluded)

Generative AI usage statement, covering the extent of GenAI usage and the validation checks applied

If you have any questions about submitting or about the conference, please contact amlucs@fnc.co.uk.

The use of Generative AI tools is permitted to assist in writing or research. However, authors take full responsibility for all content in their submission, including any content generated by AI.

Tool demonstrations are permitted, but must provide full detail on the underlying technical approach. Sales pitches are not permitted.

Evaluation will be via double-blind review, so do not provide identifying information in your submission aside from the requested contact details.

Presentations must be in-person (a single complimentary ticket will be provided for selected submissions).

We have agreement from the Conference on Applied Machine Learning in Information Security (CAMLIS) that presentations submitted to AMLUCS can also be submitted to CAMLIS. If you are planning to do so, please contact amlucs@fnc.co.uk.

Submit via MS Forms link.

Submissions will be evaluated by our Technical Committee and Review Panel against the criteria listed above, as well as the contribution to presenter diversity.

The Technical Committee will review presentation material, screening for excessive sales material, exaggerated claims, etc., and may request changes to improve technical content.